During the early years of industrialization, people measured things according to “the rule of thumb,” which meant a rough estimate of what to expect. In the early 1900s, F.W. Taylor introduced scientific management, which signaled the start of the evolution of performance cost measurement.

Highlights of the Development and Changes in Performance Management

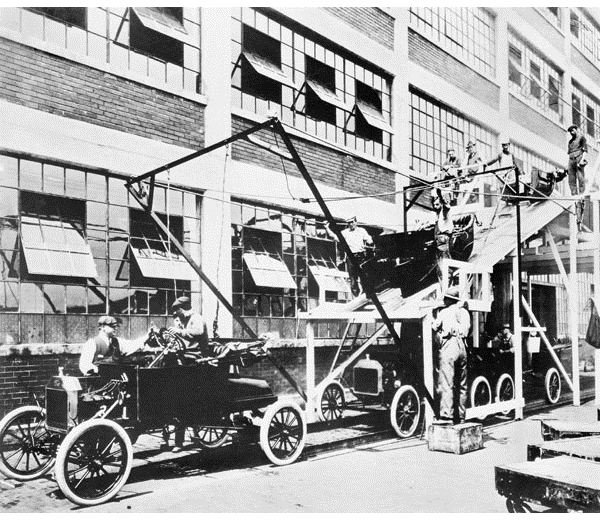

As industrialization entered the 20th century, Frederick W. Taylor saw the need for standards, or a uniform set of procedures. Taylor’s main focus was the productivity of a labor force made up mostly of unskilled immigrants or field workers. Skilled artisans and craftsmen were relatively few because such skills took years of honing to master.

The proposition of the “Father of Scientific Management” was to ensure efficiency by breaking jobs down into specialized sequences of motion instead of letting each worker decide on outcomes based on “the rule of thumb.” Back then, this was how operational activities were judged efficient or productive.

It took about a century before the methods for evaluating performance took shape as a business management discipline. As trends and the operational environment changed, so did the methods by which business performance was gauged.

The following elements highlight the evolution of performance cost measurements from the 20th to the 21st centuries:

1. Establishing cost and its resulting benefits as the bases for measuring the productive use of financial and human resources.

2. Transforming the traditional budget into a tool for cost control and planning.

3. Developing management accounting and reportorial methods.

4. Establishing GAAP rules as a set of uniform accounting standards for measuring costs and profits.

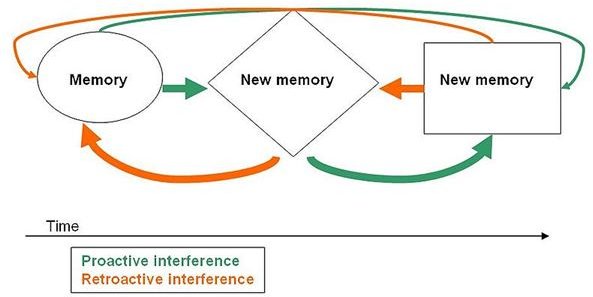

5. Incorporating the concept of proactive management.

6. The advent of social surveys as tools for Total Quality Management.

7. The formulation of corporate policies to define performance measurement of costs and profits.

8. The Balanced Scorecard.

9. Business Intelligence applications and software.

Costs as the Basis for Measuring Productivity

In the early 19th century, excesses and inefficiencies leading to corruption and incompetence were commonplace in public administration. U.S. civil society, in its quest for a truly democratic form of government, saw the need for performance measures that would demonstrate a government unit’s genuine commitment to upholding the public interest.

Frank Goodnow, a noted educator and political scientist, established the framework for distinguishing responsible from irresponsible governance. Professor Goodnow maintained that in a democratic form of government, a higher authority should not be exempt from accountability; hence the elected or appointed leader of a government unit should be personally accountable for the decisions, acts and failures of those under his leadership.

Although this need was partly met during the 1800s, the measures were still based on “the rule of thumb.” It was only in 1906 that a set of standards for measuring productivity was conceptualized, drawing on the principles of scientific management postulated by Frederick W. Taylor.

The Use of Budgets as Basis for Measuring Productivity

The New York Bureau of Municipal Research (NYBMR) created the first prototype for measuring the productivity of activities by focusing its attention on the traditional budget. Under the new system, each government unit or sector was controlled by a set of budgetary standards, which embodied the following:

- The targeted goals,

- The limitations of the resources to use in achieving said goals, and

- The delivery of public service without going over the allocated costs.

Hence the public budgeting system took on a systematic approach, first by requiring cost classifications. It also required narrative explanations and the association of costs with the benefits generated, to show clearly how a unit would use its resources in performing a specific public service.

The Executive Budget was later developed along with the promulgation of the Budget and Accounting Act of 1921, which aimed to strengthen budget control. It did so by giving the president of the United States neutral competence, or the segregated power to decide beyond the constraints of the budget, as a means for his administration to perform effectively. Costs intended as control measures were distinguished from costs to be used for progressive initiatives. However, the chief executive likewise assumed overall command responsibility for the outcomes of his decisions.

Budget controls could also reduce productivity if decisions leaned toward cost-cutting measures with less consideration for quality of output.

Nevertheless, the budget became more than just a list of what was to be procured or paid out in order to carry out functions. It became widespread in government and was later adopted by industries as a tool for measuring the costs to be allocated. It later developed into a means for identifying improvements or deviations that reflected how a unit used its resources to meet its targeted goals.
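The idea of checking a unit's spending against its allocation can be sketched in a few lines of Python. This is only an illustration: the `BudgetLine` structure, the function names and the figures are hypothetical and are not taken from the NYBMR system or from any real budget. Here a deviation is simply the allocated cost minus the actual cost.

```python
from dataclasses import dataclass


@dataclass
class BudgetLine:
    """One cost classification in a unit's budget (hypothetical structure)."""
    name: str
    budgeted: float  # cost allocated for the period
    actual: float    # cost actually incurred


def variance(line: BudgetLine) -> float:
    """Positive means under budget; negative means the allocation was exceeded."""
    return line.budgeted - line.actual


def variance_report(lines: list[BudgetLine]) -> dict[str, float]:
    """Map each cost classification to its deviation from the allocation."""
    return {line.name: variance(line) for line in lines}


# Illustrative figures only.
lines = [
    BudgetLine("street cleaning", budgeted=12_000.0, actual=11_250.0),
    BudgetLine("water supply", budgeted=8_000.0, actual=8_600.0),
]
report = variance_report(lines)
overspent = [name for name, value in report.items() if value < 0]
```

In this sketch, `overspent` lists the classifications that went over their allocated costs, which is the kind of deviation a budget review would then have to explain.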

The Development of Management Accounting and Reportorial Procedures

One of the faults of the new budget system as a measurement of performance was its tendency to stagnate; industries and their managers had less incentive to innovate. This gave rise to the concept of management accounting, which provided business owners with information about product costs as a further measure of stewardship on the part of production managers.

Cost accounting systems were established as a means to allocate outlays intended for production, as expenses were further differentiated into:

- Cost of goods procured or manufactured,

- Cost of goods sold,

- Cost of purchases that were still on hand, and

- Operational expenses.

Activity-based costing became the basis for how funds should be allocated. The method allowed management to calculate the rate of return on investments, the rate at which inventories were converted into profit, and the actual liquidity of a company once debts and receivables were taken into account.

The objective was to distinguish the cash outlays that directly generated business profits from the expenses that were only indirectly related to profit generation. That way, investors and creditors had a clearer basis for making informed decisions on where to put their money.
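The three calculations mentioned above can be sketched as follows. The formulas are the conventional textbook ones (return on investment, inventory turnover and the quick ratio) and are assumed here for illustration; the article does not specify which formulas were used at the time, and all function names and figures are hypothetical.

```python
def return_on_investment(net_profit: float, investment: float) -> float:
    """Net profit earned per unit of money invested."""
    return net_profit / investment


def inventory_turnover(cost_of_goods_sold: float, average_inventory: float) -> float:
    """How many times inventory was sold and replaced during the period."""
    return cost_of_goods_sold / average_inventory


def quick_ratio(cash: float, receivables: float, current_liabilities: float) -> float:
    """Liquid assets available per unit of short-term debt."""
    return (cash + receivables) / current_liabilities


# Illustrative figures only.
roi = return_on_investment(net_profit=20_000, investment=100_000)
turnover = inventory_turnover(cost_of_goods_sold=240_000, average_inventory=60_000)
liquidity = quick_ratio(cash=30_000, receivables=45_000, current_liabilities=50_000)
```

A quick ratio above 1 in this sketch would suggest that cash and receivables alone cover the short-term debts, without relying on unsold inventory.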

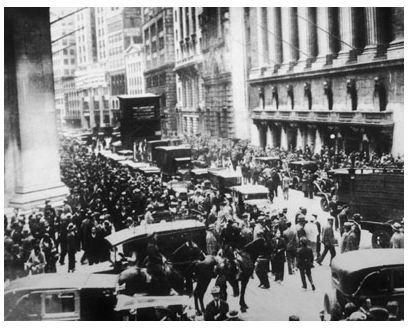

Financial reports presented factual figures on how company money was being spent. This kind of report gave a snapshot of the entity’s potential for generating higher yields if speculators were to invest their money in the company as a short-term venture. Many prospectors became overnight financial successes as innovations sprang from different industrial sectors. Unfortunately, however, it turned out that some were merely “bubbles that were about to burst.”

The Implementation of Generally Accepted Accounting Principles

As the turn of events had it, many emerging companies saw the simplicity of acquiring capital funds by simply providing the most enticing financial performance reports. Accounting methods and procedures were being used to cover up failed business ventures. This of course led to the “Stock Market Crash of 1929” and subsequently the “Great Depression.”

Its offshoot was the promulgation of the Securities Exchange Act of 1934 and the creation of the Securities and Exchange Commission (SEC). The latter served as the overseer for monitoring the real condition and performance abilities of publicly traded companies. Back then, accountants provided their own justifications for their methods of determining profits.

To address this issue, a standard of Generally Accepted Accounting Principles (GAAP) was established as the SEC’s founding guidelines. This is how GAAP rules became the set of scientifically conceptualized standards for recording and reporting business costs and revenues, for purposes of determining business profitability.

Throughout the years, performance measurement of costs and profits was perceived as a method for increasing the competitiveness and profitability of a business entity. Yet budget projections and productivity goals were mostly based on historical costs. Hence the initiatives taken were often regarded as reactions to what had already transpired, and the goals were mostly focused on short-term activities.

The Call for Proactive Management

During the 1970s, U.S. firms began to feel the impact of increasing competition from international traders. China had become a “world factory” and was churning out processed foods, beverages, cosmetics, pharmaceuticals and a wide range of consumer products that somehow resembled popular U.S. and European brands. They might have been poor imitations but they were at least affordable. Japan, likewise, was making a mark by improving the quality of its products, particularly electronic devices and automobiles, which most consumers began to prefer for their innovative quality and affordability.

Most of the time, improving productivity in the U.S. meant reacting to customers’ complaints, and companies’ efforts were still bound by the constraints of the budget. Most companies were more conscious of the financial results that would measure the effectiveness of their performance. A majority of business goals were targeted at the capital markets, as a means to increase investors’ financial support.

Some of those who dared to venture saw failures, as other upcoming innovations rendered their products or services obsolete or they were disrupted by a more economical version of their innovation. In some instances, innovations were not fully exploited to their potential, which paved the way for other entrepreneurs to create improvements that made their products more attractive and sellable.

Between 1980 and 1990, some unscrupulous executives deemed it best to simply manipulate their financial records and reports, and the adverse impact was not felt until much later, during the bankruptcy scandals of 2001-2002.

The Advent of TQM and Social Surveys

The ideas behind Total Quality Management (TQM) are commonly associated with W. Edwards Deming and draw on statistical quality-control methods dating from the 1920s. However, the concept of aligning process improvements with customer satisfaction was not well received at first and seemed far-fetched: one could only make improvements once the product was already on the market and the customers’ responses could be observed.

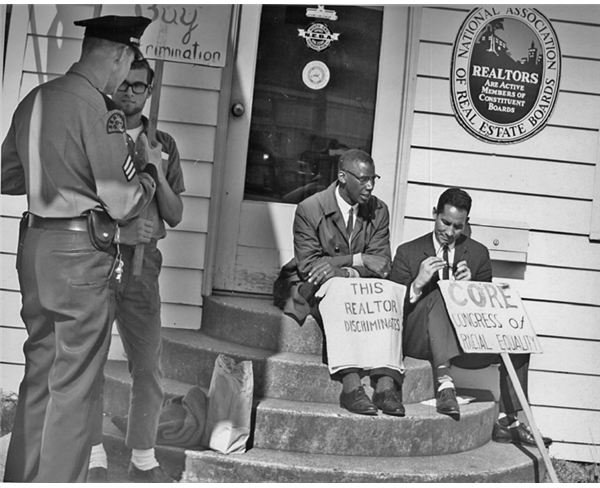

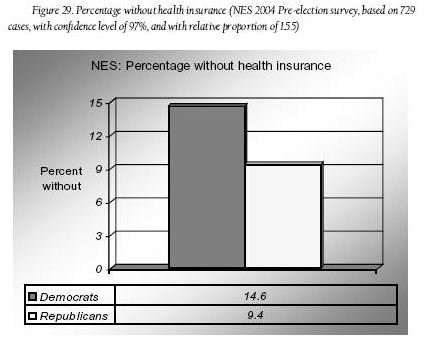

As the government turned to social surveys during the 1970s as its means for measuring the efficiency of public services rendered, TQM became a realistic possibility. Social surveys provided information about demographics, opinions and a wide range of details on what the average customer wants. Data could further be broken down by gender, age, ethnicity, culture and educational level. Surveys became a proactive tool for performance cost measurement, as customer feedback was more accurate and could be used as a basis for future innovations.

Conducting customer surveys furnished solid information that could be presented to shareholders and other key stakeholders to justify the feasibility of proposed business ventures and innovations. Improvements could be made not as a reactive behavior but instead be presented to customers as a form of innovative strategy.

The Need for Corporate Policies to Define Productivity Measurements

As business trends continue to develop, performance measurement needs a clear definition of its basis and goals. It is no longer only a matter of cost allocation and of financial results that will please investors. Advancements in technology have broadened the consumer markets, which also means increased competition and the need to comply with international standards.

The requirements have become complex and the basic criteria have become vague. Only a few are aware that it was a combination of philosophical teachings introduced in the early 1900s that were developed throughout the years by using cost as the basic point of comparison to determine productivity.

Advocates recommended defining performance measurement not by the governing theories but by considering a theory’s applicability to the business. Adopting a definition that clearly defines the behaviors, activities and goals within the organization will provide a more realistic concept, since managers and employees can easily relate to the strategies being used to achieve productivity goals.

Corporate policies should define the objectives for measuring the results of performance. They should make clear that the assessments are meant to determine the causes not only of financial results but also of non-financial ones.

1. Financial measurements may be in any of the following forms:

- Profit Margin, which measures pricing initiatives and how well they can withstand (1) rising costs of goods sold, (2) increases or declines in demand, and (3) the overhead costs incurred to sustain the business.

- Return on Assets (ROA), developed in 1919 by the DuPont Corporation, which indicates how well the company uses its capital assets.

- Return on Equity (ROE), also conceptualized by DuPont, which tells investors how well the company is using its resources for their benefit.

- Partial and Total Productivity, which measure the proportion of outputs against the resources used, i.e. capital, labor, energy or material.

- Time-Based Productivity, which compares the time spent creating value with the total time spent on production as a measure of efficiency. It also covers the time it takes for receivables to turn into actual cash collections, or the time that purchased stock remains unsold.

- Efficiency of Asset or Equipment Use, which evaluates the equipment or machinery employed to determine its full potential for generating the maximum level of output. Hence, values will be related to (a) the time spent in use, (b) the rate or speed at which production is completed, and (c) most importantly, the quality of the outputs produced.

2. Non-Financial Measurement of performance refers to the data that are being used to determine goals. Historical costs can no longer withstand the demands of a highly competitive economy that uses real-time information provided by computerized technology and the Internet:

- Reliability of internal data

- Objectivity of data that is based on observable opinions and not on subjective perception

- Benchmark references, as outcomes are compared against similar inputs and outputs of organizations within the same industry.
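The financial measures listed under item 1 above can be sketched in Python as simple ratios. The formulas follow common textbook definitions and are an assumption on our part, since the article describes the measures only in words; the function names and sample figures are hypothetical.

```python
def profit_margin(net_income: float, revenue: float) -> float:
    """Share of each unit of sales kept as profit."""
    return net_income / revenue


def return_on_assets(net_income: float, total_assets: float) -> float:
    """Profit generated per unit of assets employed."""
    return net_income / total_assets


def return_on_equity(net_income: float, shareholders_equity: float) -> float:
    """Profit generated per unit of investors' equity."""
    return net_income / shareholders_equity


def partial_productivity(output: float, single_input: float) -> float:
    """Output per unit of one resource, such as labor."""
    return output / single_input


def total_productivity(output: float, inputs: dict[str, float]) -> float:
    """Output per unit of all resources combined."""
    return output / sum(inputs.values())


def value_added_time_ratio(value_added_hours: float, total_hours: float) -> float:
    """Fraction of production time that actually creates value."""
    return value_added_hours / total_hours


# Illustrative figures only.
inputs = {"capital": 60_000, "labor": 100_000, "energy": 15_000, "material": 25_000}
margin = profit_margin(net_income=50_000, revenue=400_000)
roa = return_on_assets(net_income=50_000, total_assets=250_000)
roe = return_on_equity(net_income=50_000, shareholders_equity=200_000)
labor_productivity = partial_productivity(output=400_000, single_input=inputs["labor"])
overall_productivity = total_productivity(output=400_000, inputs=inputs)
time_efficiency = value_added_time_ratio(value_added_hours=6, total_hours=8)
```

Each function returns a plain ratio, so the results are only meaningful when compared against a target, a prior period or an industry benchmark.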

In considering all these, it is clear that the evaluation of performance has evolved beyond costs as the focal point in measuring productivity. Rather, it’s now aimed at ensuring that there is a balanced state of business conditions to ensure that all strategies being used are aligned with the business goals. However, the processes all seem too tedious and difficult to comprehend.

The Balanced Scorecard

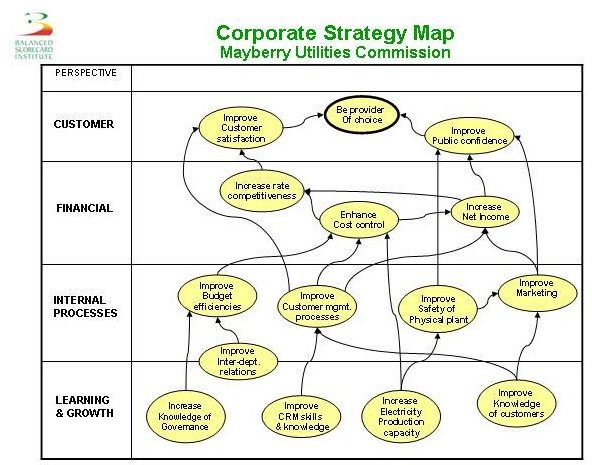

In 1992, Robert S. Kaplan and David P. Norton introduced the Balanced Scorecard, which links a company’s financial performance and its customers’ satisfaction to the strategies employed.

Businesses have to formulate their strategies and periodically assess them against the financial and non-financial outcomes. Through continuous review, the results provide the foundation for new plans and new strategies for improvement. In using this tool, it is important that the organization adopt the following viewpoints:

(a) Employee knowledge and training must be aligned with how the strategies are to be carried out, while managers should give guides on where training costs are best invested to ensure continuous improvements.

(b) Internal business processes are evaluated based on how customers’ requirements are met.

(c) Innovations and improvements should be based on customers’ needs and expectations but should be analyzed according to the type of customers and the process that is most suitable based on the company’s resources.

(d) All such perspectives should be aligned with the company’s financial resources and should be backed by additional analyses, such as cost-benefit analysis, risk assessments, EBITDA and ROI, as well as customer and employee retention rates.

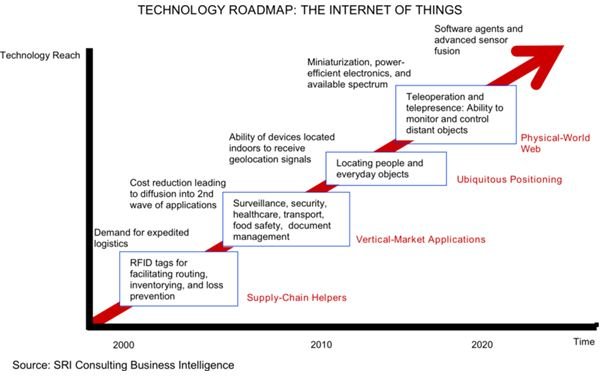

Business Intelligence Tools

As advancements in technology surged forward at the turn of the 21st century, the availability of real-time information led to the discovery of financial anomalies and bankruptcies. This signaled another round of stock market crashes and economic depression. Yet, picking up the pieces from where most businesses had left off also meant refocusing all strategies toward employee retention and customer satisfaction.

Today, measuring business productivity has finally become an organized system of analyzing not only the financial costs but also the non-financial data gathered as real-time inputs. Business intelligence (BI) tools have data warehouses that collect and analyze information, automatically and simultaneously cross-referencing all inputs gathered from every transaction of the business operations.

They can be programmed to generate reports that measure business productivity using real-time information gathered from online resources, from internal time clocks or electronic timesheets, from asset-usage monitors that log the number of hours an asset was in use and the outputs it generated, and from other similar monitoring tools.

In capping the evolution of performance cost measures in today’s highly advanced technological environment, it can be surmised that all productivity factors, measures and concerns have been captured in business intelligence software. The only thing left for financial analysts to do is to evaluate the suitability of the BI tool to the business. The total cost of ownership, the projected benefits and the scalability of the software are important factors in that evaluation.

References

- Image: Internet of Things By SRI Consulting Business Intelligence/National Intelligence Council / Wikimedia under public domain.

- Image: Fair housing protest, Seattle, Washington, 1964. Confronting racial discrimination in housing sales. Courtesy of Seattle Municipal Archives at Wikimedia CCA-SA 2.0 Generic

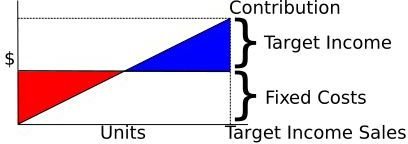

- Image: Cost-Volume-Profit diagram, showing how to compute Target Income Sales, dividing Fixed Costs plus Target Income by Unit Contribution. By Nils R. Barth / Wikimedia under public domain.

- Image: Depression-stock-market-crash-1929 By Freelancer Journalist / Wikimedia under public domain.

- Image: Assembly Line by User Samba1aa / Wikimedia Commons under public domain

- Evolution of Scientific Management Toward Performance Measurement - by Ratnayake, R.M. Chandima, Assistant Professor (IKM) / Doctoral Research Fellow (CIAM), Center for Industrial Asset Management (CIAM), Faculty of Science & Technology, University of Stavanger, Norway

- Image: Example of a balanced scorecard strategy map for a public-sector organization By Parveson / Wikimedia under public domain.

- Image: Percentage without health insurance By Joseph Fried / Wikimedia CCA-SA-3.0. Unported

- Image: Graph chart about proactive and retroactive memory interference By Aritter / Wikimedia CC-BY-SA-2.5,2.0,1.0.